Researchers at Carnegie Mellon University (CMU) have created an “Insider Threat Ontology” as a framework for representing and sharing knowledge about malicious insider cases. The ontology features rich constructs for describing people who take malicious actions to compromise or exploit cyber assets. However, modeling end-user error was outside the scope of the CMU work. The current work enumerates extensions to the CMU ontology that model end-user and system administrator error. Specifically, additions to the inheritance lattice of actors are presented, and additional types of actions pertaining to human error are described. The article concludes with an example that uses these extensions to model a case drawn from the Privacy Rights Clearinghouse database of data breaches.

1. Introduction

A strikingly large percentage of cybersecurity problems occur because human actions compromise or circumvent technological safeguards. A variety of people within an organization can play a role in an attack. Malicious insiders, whether system administrators or end users, are responsible for a significant amount of damage and loss. In addition, improperly trained or insufficiently vigilant system administrators commit a range of errors, and end users with no malicious intent are also a significant source of errors that lead to damaging and ultimately avoidable losses.

The malicious insider problem has long been recognized as a daunting challenge in cybersecurity. Disgruntled employees who leave organizations with sensitive data and current employees who see a chance to profit by selling secrets are just two of the many forms of malicious insider behavior. Accordingly, researchers at Carnegie Mellon University’s Software Engineering Institute have created a highly articulated ontology [1] that standardizes the vocabulary and maps the relationships among entities needed to describe malicious insider attacks formally. The ontology provides a well-thought-out knowledge representation scheme that can be used both to share information in a standardized form and to build reasoning systems for the domain.

The CMU ontology is well-designed and comprehensive with regard to knowledge representation of malicious insider actions, events, assets, and information. However, it was not intended to include the modeling and representation of knowledge pertaining to errors made by people. The purpose of this paper is to propose extensions to the CMU Malicious Insider Ontology to enable explicit modeling of human error in cybersecurity breaches.

The remainder of this paper reviews the literature on human error in cybersecurity breaches, discusses the CMU model, and then presents a set of extensions to CMU’s ontology that permit modeling of events mediated by human error. Use of the extensions is illustrated by formally representing a narrative about a real-world data breach documented in the Privacy Rights Clearinghouse [17] database, which documents 5,200 data breaches made public since 2005. The paper concludes with some lessons drawn from the exercise.

2. Human Error in Cybersecurity Vulnerabilities

Kharif [18] reports that 2016 was the worst year on record for data breaches. The Identity Theft Resource Center [19] recorded more than 1,000 breaches in 2016, an increase of 40% over 2015. According to a report by IBM, 95% of all security breaches involve human beings to some degree [2]. Daugherty [21] summarizes BakerHostetler’s 2015 and 2016 Data Security Incident Response Reports. The 2015 report states that 37% of incidents involved human actions or errors, a category covering both system administrator error and end-user error. Daugherty also states that successful phishing/malware attacks contributed to 25% of data breaches. Taken together (37% + 25%), his summary suggests that human error plays a role in 62% of all incidents. BakerHostetler’s statistics were based on a smaller sample than IBM’s data, but the message from both is the same: human error is an extremely important proximate cause of security breaches.

Howarth [3] describes a range of human errors often involving people inside organizations who do dangerous things either accidentally or deliberately. Major categories of human error include the inadvertent exposure of sensitive data, creating conditions that allow the introduction of malware into mission-critical systems, and creating conditions that allow theft of intellectual property or sensitive information.

Howarth concludes that organizations that implement strong technological security procedures still often pay insufficient attention to human sources of vulnerability, including errors made by system administrators. He strongly advocates enhanced security training to reduce human error. Armerding [10] cites a report indicating that 56% of workers who use the Internet on the job receive no security training at all. While malicious insiders remain a significant threat to cybersecurity, it is clear that substantial problems also arise from people with no malicious intent who behave dangerously or are tricked into compromising sensitive information.

Social engineering attacks are those that trick people into making errors. Phishing is one of the most common forms of social engineering, and, surprisingly, phishing attacks still frequently succeed. Modern phishing attacks are much more sophisticated than earlier ones and typically aim to install malware. Spear phishing attacks are especially sophisticated: they use emails that appear to come from trusted sources or from businesses with which the target interacts. Phishing attacks are so common and so successful that a worldwide working group, the Anti-Phishing Working Group (antiphishing.org) [20], has formed to foster research, data exchange, and public awareness of the problem.

A surprisingly common problem arises from people simply moving sensitive data around via unsafe technologies. People send documents to the wrong recipient through email, carry sensitive information on jump drives, and place sensitive documents on insecure file-sharing sites. Verizon reports that 63% of confirmed data breaches were facilitated by the use of legitimate passwords that were weak, default, or stolen. Point-of-sale attacks occur frequently, and they are often enabled by people who use point-of-sale machines for other purposes, including web surfing and email [8]. Verizon estimates that only 3% of phishing attacks are reported by their targets. A quick scan of the author’s spam folder reveals 5 emails with suspicious attachments out of the 215 emails currently in the folder.

System administrators are responsible for significant problems as well. Barrett, Chen, and Maglio [23] discuss the challenges of monitoring and maintaining increasingly complex systems, and the costs associated with deficient processes. Fulp et al. [24] identify errors in system configuration, such as in firewall maintenance and patch management, and failures of signature-based intrusion detection as critical problems. Other problems mediated by system administrators include a lack of access-control management as end users change roles within an organization or leave it. Seemingly simple-to-rectify problems such as ineffective patch management become more significant as system administrators are called upon to manage more and larger systems.

3. The CMU Malicious Insider Ontology

The CMU Malicious Insider Ontology was created to provide a standard means of formally representing cases of malicious activity inside organizations [1]. Its authors set out to model people, currently or formerly associated with an organization, who had privileged access and deliberately used it in a way that harmed the organization. Insider status applies to anyone with privileged access, including contractors and people working for trusted business partners of the victim organization.

The authors of the CMU ontology quote Gruber [11] who described an ontology as a “coherent set of representational terms, together with textual and formal definitions that embody a set of representational design choices.” In other words, an ontology provides a standardized vocabulary for objects, actions and relationships among its constituent parts that affords knowledge representation in a sharable and machine-processable form.

The authors of the CMU Malicious Insider Ontology chose to utilize Web Ontology Language 2 (OWL 2) [12] constructs to implement the ontology because it is a standard of the World Wide Web Consortium and has many support tools. They performed a round of concept mapping [13] to assess the scope of the project. They identified the following five base classes:

- Actor

- Action

- Event

- Asset

- Information

They chose to model Action and Event as separate classes, distinguishing actions, which are observable occurrences, from events, which are inferred to have occurred because of actions. An event may also comprise multiple actions. Within the Action taxonomy, the ontology includes high-level concrete actions such as DigitalAction, FinancialTransactionAction, and JobChangeAction, and modifier actions such as SuspiciousAction (an activity that might raise concerns about malicious behavior). Modifier actions are used to qualify the concrete actions; for instance, an EmailAction (a type of DigitalAction) might also be a SuspiciousAction. The eleven Event classes include DataExfiltrationEvent (unauthorized removal of data from a computer) and JobOfferEvent (receiving an offer to work somewhere else). The ontology has extensive representations pertaining to people leaving or changing jobs, since such events are often the starting point for a variety of malicious activities.
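The Action/Event split can be illustrated with a small Python sketch. It is not CMU's OWL 2 encoding: Python stands in for the ontology language, the individuals `email1` and `exfil1` and the helpers `ancestors`, `ActionIndividual`, and `Event` are hypothetical, and placing SuspiciousAction under ActionModifier is an assumption based on the paper's wording. The sketch shows an observed action carrying a concrete type and a modifier type at the same time, and an event held as a container of the actions from which it is inferred.

```python
from dataclasses import dataclass, field

# Hypothetical fragment of the CMU Action taxonomy, stored as child -> parent links.
SUBCLASS_OF = {
    "DigitalAction": "Action",
    "EmailAction": "DigitalAction",
    "FinancialTransactionAction": "Action",
    "JobChangeAction": "Action",
    "SuspiciousAction": "ActionModifier",  # assumed placement
}


def ancestors(cls):
    """Return cls plus every superclass reachable through SUBCLASS_OF."""
    result = {cls}
    while cls in SUBCLASS_OF:
        cls = SUBCLASS_OF[cls]
        result.add(cls)
    return result


@dataclass
class ActionIndividual:
    """An observed action; it may carry a concrete type and modifier types at once."""
    name: str
    asserted_types: set

    def all_types(self):
        """Asserted types plus their inherited superclasses."""
        types = set()
        for t in self.asserted_types:
            types |= ancestors(t)
        return types


@dataclass
class Event:
    """An inferred occurrence, composed of the actions that evidence it."""
    name: str
    event_type: str
    actions: list = field(default_factory=list)


# An observed email that is also flagged as suspicious (hypothetical individual).
email1 = ActionIndividual("email1", {"EmailAction", "SuspiciousAction"})
# An event inferred from, and composed of, that action.
exfil1 = Event("exfil1", "DataExfiltrationEvent", [email1])

print(sorted(email1.all_types()))
# ['Action', 'ActionModifier', 'DigitalAction', 'EmailAction', 'SuspiciousAction']
```

Keeping the modifier as an extra asserted type, rather than as a field on the action, mirrors the idea that one individual can belong to several classes at once.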

The Asset and Information classes comprehensively enumerate a vocabulary for their respective domains; assets are characterized as the targets of malicious actions. The CMU ontology also includes a temporal component for representing a series of actions and events that occur over time, for which its authors incorporate Peterson’s [14] SpaceTime Ontology.

4. Extensions for End-user Error

The CMU ontology features a total of 124 classes. Its wide-ranging modeling constructs for Asset and Information are applicable to any cybersecurity incident. The Actor class contains two broad subtypes: Organization and Person. The Action and Event classes appear to focus on malicious behaviors by insiders and are not meant to provide significant capabilities for modeling human error. The following sections present extensions to the Actor, Action, and Event classes that afford more fine-grained modeling of actors and of a variety of human errors committed both by end users and by system administrators.

4.1 Extensions to Actor

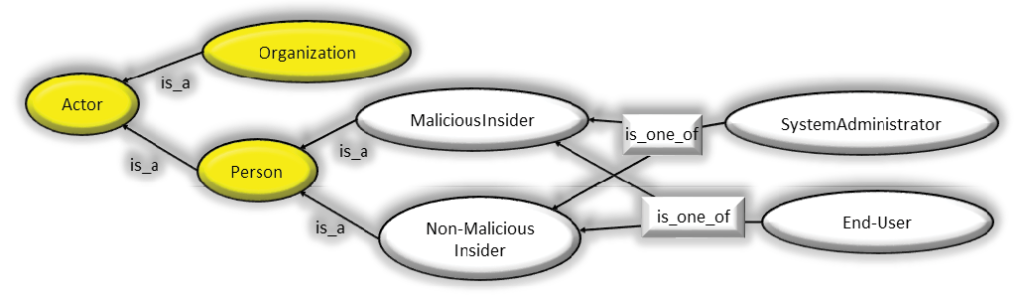

The design decision to give the Actor class only two subclasses is understandable, because the intent of the ontology is to model malicious behaviors by insiders. Consequently, instances of the Person class would typically be the bad actor(s) in the incident. Since innocent people might be duped into aiding the bad actor, a more articulated set of subclasses affords finer-grained modeling. Figure 1 contains an extended class lattice for the Actor class. The yellow ellipses are classes from the CMU ontology, and the gray ellipses are the extensions proposed here.

Figure 1: The Inheritance Hierarchy for Actor from the CMU Ontology with Extensions for Human Error. – (Source: Author)

As can be seen in Figure 1, a distinction is made between a malicious actor and one who does not have malicious intent. The lattice also captures the fact that both people in charge of systems and end users may or may not be malicious. These extensions make it possible to describe in fine detail human errors committed either by those responsible for administering systems or by those who use them. Note that the is_one_of relationship disambiguates the superclass-subclass relationships, creating a logical or rather than a logical and: an instance belongs to one of the listed subclasses rather than to all of them.
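A minimal sketch of the is_one_of reading follows, assuming a reconstruction of Figure 1 from the prose. The subclass names MaliciousInsider, Non-MaliciousInsider, SystemAdministrator, and End-User are assumptions (only Person.Non-MaliciousInsider.End-User appears verbatim later in the paper), and treating every parent as an is_one_of node is also an assumption. An individual's asserted classes are given as paths from Actor; the hypothetical `violates_is_one_of` helper flags an individual whose paths take two different branches below the same parent.

```python
# Hypothetical reconstruction of the extended Actor lattice: parent -> subclasses.
SUBCLASSES = {
    "Actor": ["Organization", "Person"],
    "Person": ["MaliciousInsider", "Non-MaliciousInsider"],
    "MaliciousInsider": ["SystemAdministrator", "End-User"],
    "Non-MaliciousInsider": ["SystemAdministrator", "End-User"],
}
# Parents whose subclasses are joined by is_one_of (assumed: all of them).
IS_ONE_OF = set(SUBCLASSES)


def violates_is_one_of(paths):
    """Return True if two asserted class paths take different branches below
    the same is_one_of parent, e.g. both MaliciousInsider and Non-MaliciousInsider."""
    for i, a in enumerate(paths):
        for b in paths[i + 1:]:
            k = 0
            while k < min(len(a), len(b)) and a[k] == b[k]:
                k += 1
            # The paths share a[:k] and split immediately after a[k - 1].
            if 0 < k < min(len(a), len(b)) and a[k - 1] in IS_ONE_OF:
                return True
    return False


careless_clerk = [("Actor", "Person", "Non-MaliciousInsider", "End-User")]
contradictory = [
    ("Actor", "Person", "MaliciousInsider", "End-User"),
    ("Actor", "Person", "Non-MaliciousInsider", "End-User"),
]
print(violates_is_one_of(careless_clerk))  # False
print(violates_is_one_of(contradictory))   # True
```

A path that is merely a prefix of another (for example, Person and Person.MaliciousInsider) is not a conflict, because it asserts a superclass rather than a competing alternative.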

4.2 Extensions to Action

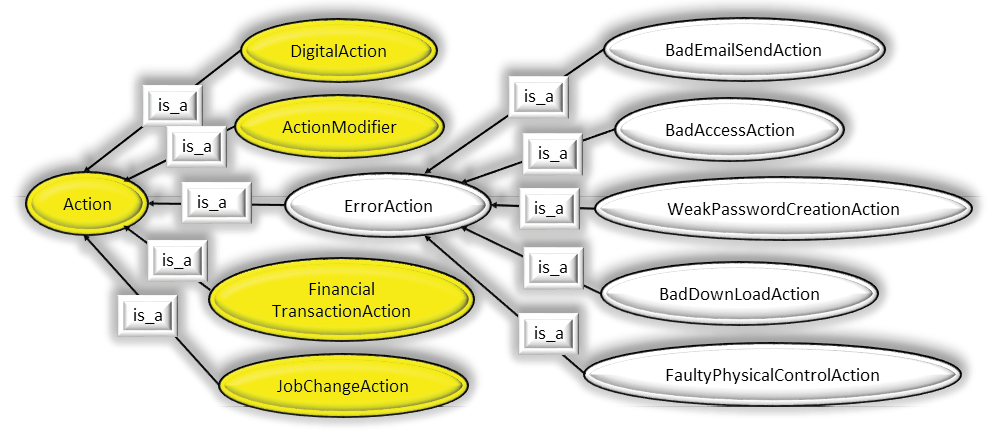

Creating explicit constructs to represent erroneous actions might be achieved in any of several ways. One possibility is to make ErrorAction a subclass of the ActionModifier class. Under this approach, accidentally sending an email with sensitive information to the wrong recipient would be modeled as a DigitalAction.EmailAction modified by ErrorAction. A second alternative is to make ErrorAction a separate top-level action. Since the other actions are performed deliberately by a malicious actor and ErrorAction instances are not, it is reasonable to model ErrorAction as its own top-level Action. A third alternative is to treat all error actions as special cases of UnauthorizedAction. The separate top-level class is preferred to subclassing UnauthorizedAction because, in the malicious insider taxonomy, an unauthorized action is deliberate, whereas in the error taxonomy an action is explicitly represented as inadvertent.
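The argument for a separate top-level ErrorAction can be sketched in Python, with the caveat that Python classes only approximate OWL 2 classes. The `deliberate` attribute is a hypothetical intent annotation, and `ErrorAsUnauthorized`, `declared_intents`, and `is_consistent` are illustrative names. Python silently lets a subclass override an inherited attribute, so the sketch adds an explicit check that flags an ancestry asserting both intents, which is the contradiction that subclassing UnauthorizedAction would introduce.

```python
class Action:
    """Root of the action taxonomy (sketch)."""
    deliberate = None  # hypothetical intent annotation; None means "not specified"


class UnauthorizedAction(Action):
    deliberate = True  # in the malicious insider taxonomy, unauthorized implies intent


class ErrorAction(Action):
    deliberate = False  # proposed top-level class: explicitly inadvertent


class BadEmailSendAction(ErrorAction):
    """Error subtype named in the text; inherits deliberate = False."""


class ErrorAsUnauthorized(UnauthorizedAction):
    """The rejected third alternative: an error modeled under UnauthorizedAction."""
    deliberate = False


def declared_intents(cls):
    """Collect the non-None 'deliberate' values declared anywhere in cls's ancestry."""
    return {c.__dict__["deliberate"] for c in cls.__mro__
            if "deliberate" in c.__dict__ and c.__dict__["deliberate"] is not None}


def is_consistent(cls):
    """A class is consistent if its ancestry never asserts both intents."""
    return len(declared_intents(cls)) <= 1


print(is_consistent(BadEmailSendAction))   # True: only 'inadvertent' is asserted
print(is_consistent(ErrorAsUnauthorized))  # False: inherits 'deliberate' and overrides it
```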

Figure 2: The Inheritance Hierarchy for Action from the CMU Ontology, and Extensions for Human Error. – (Source: Author)

An initial set of ErrorAction types has been identified as indicated in Figure 2. These categories were culled from the literature on end user error and do not constitute an exhaustive list. It should be noted that ontological modeling routinely requires multiple inheritance. Some generalization-specialization relationships are excluded from Figure 2. For example, a BadEmailSendAction might be an ErrorAction and a FinancialTransactionAction.
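The multiple inheritance mentioned above can be approximated with Python's multiple inheritance. This is an analogy under assumptions: in OWL 2 a class simply carries several subclass axioms and has no method resolution order, and the list of observed actions is hypothetical. The point of the sketch is that a query for either superclass retrieves the same BadEmailSendAction individual.

```python
class Action:
    """Root of the action taxonomy (sketch)."""


class DigitalAction(Action):
    pass


class FinancialTransactionAction(Action):
    pass


class ErrorAction(Action):
    """Proposed top-level class for inadvertent actions."""


# BadEmailSendAction specializes two branches at once, as the text allows.
class BadEmailSendAction(ErrorAction, FinancialTransactionAction):
    pass


observed = [BadEmailSendAction(), FinancialTransactionAction(), DigitalAction()]

# Query by either superclass: the same individual answers to both.
errors = [a for a in observed if isinstance(a, ErrorAction)]
financial = [a for a in observed if isinstance(a, FinancialTransactionAction)]

print([c.__name__ for c in BadEmailSendAction.__mro__])
print(len(errors), len(financial))  # 1 2
```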

4.3 Extensions to Event

As previously stated, the CMU ontology models eleven events with a clear focus on deliberate, malicious acts. Events include SystemModificationEvent, DataDeletionEvent, FraudEvent, DataExfiltrationEvent, SabotageEvent, TheftEvent, and others. The intent in creating these Event types is clear: each may subsume several actions. For instance, a DataExfiltrationEvent might involve copying data into an email and sending the email to an entity that competes with the victim organization. To parallel the structure of the CMU work, a subsuming ErrorEvent class is created. ErrorEvent is disjoint from six of the eleven Event classes in the CMU ontology (for instance, MasqueradingEvent, which is necessarily deliberate), but it overlaps others, such as DataDeletionEvent, which might be either accidental or malicious. The fact that such extensions can be integrated seamlessly into the CMU ontology suggests that the ontology is well structured.
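The disjoint/overlapping distinction for ErrorEvent corresponds to what OWL 2 expresses with a DisjointClasses axiom; classes without such an axiom may share members. The Python sketch below mimics that check. Only the ErrorEvent/MasqueradingEvent pair is taken from the text; the other five disjoint classes are not named here, and `violated_axioms` is a hypothetical helper.

```python
# Disjointness axioms (sketch). The text names only MasqueradingEvent as disjoint
# from ErrorEvent; the other five disjoint CMU event classes would be added the same way.
DISJOINT = {
    frozenset({"ErrorEvent", "MasqueradingEvent"}),
}


def violated_axioms(types):
    """Return each declared-disjoint pair of classes that an individual belongs to."""
    types = set(types)
    return sorted(tuple(sorted(pair)) for pair in DISJOINT if pair <= types)


# Overlap is allowed by default: no axiom forbids this combination.
accidental_deletion = {"ErrorEvent", "DataDeletionEvent"}
# A masquerade is necessarily deliberate, so it cannot also be an error.
impossible_event = {"ErrorEvent", "MasqueradingEvent"}

print(violated_axioms(accidental_deletion))  # []
print(violated_axioms(impossible_event))     # [('ErrorEvent', 'MasqueradingEvent')]
```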

5. An Example Application of the Extended Ontology

The CMU report includes a methodology for modeling textual descriptions of incidents, along with several examples. In its notation, classes are represented as labeled yellow ellipses and instances of classes as purple diamonds. Arrows represent relationships between classes and instances, and their directionality removes any ambiguity about the nature of each relationship. This scheme is the same as the one produced by the Protégé tool developed at Stanford [22], which supports the OWL 2 Web Ontology Language. The method used to create the formalization is algorithmic:

- Identify the main Actors

- Model Actors’ actions

- Establish basic relationships

- Model IT Infrastructure

- Connect Infrastructure to Actors

- Model IT actions

The process described in the CMU work was used in the current work. The process is well thought out and logical, and its use led to a straightforward representation of the example case presented next.

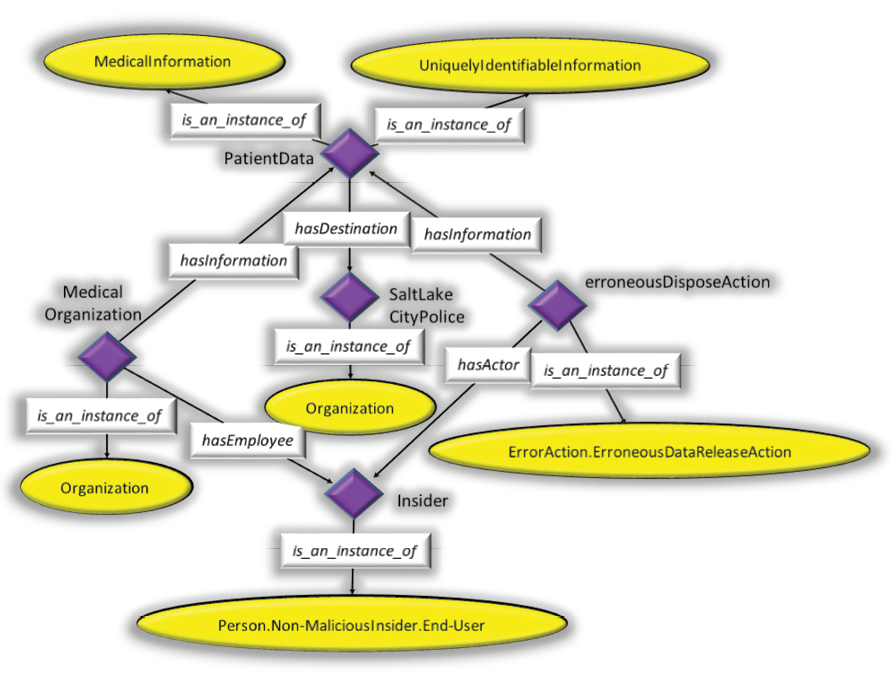

Figure 3: Representation of an End-User Error Using the Extended Ontology. – (Source: Author)

Figure 3 illustrates how a data breach incident is formally modeled using elements of the Insider Threat Ontology created at CMU together with the proposed extensions. The representation was built with the CMU methodology and evolved through multiple steps. Because that process is thoroughly described in the CMU document, only the final result is presented here rather than the step-by-step evolution of the representation. The following passage, which Figure 3 represents using the ontology, was taken verbatim from the Privacy Rights Clearinghouse database [17], which documents more than 5,000 data breaches.

Names, credit card numbers, Social Security numbers were found in a dumpster. A man was throwing away some stuff in a dumpster and found it was chock full of medical records. “There’s everything in there from canceled checks to routing numbers,” he said. Salt Lake Police packed away perhaps twenty boxes of papers, and said they would protect the documents, as they dug into the matter.
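To make the six modeling steps concrete, the following Python sketch encodes the dumpster case as subject-predicate-object triples, one group per step. Figure 3 is not reproduced in text, so apart from patientData, MedicalInformation, UniquelyIdentifiableInformation, Asset, Organization, ErrorAction, and the Person.Non-MaliciousInsider.End-User path, every individual and property name (for example `employee1`, `clinic1`, `worksFor`, `exposed`) is hypothetical and is not necessarily what Figure 3 shows. The final query illustrates the double typing of patientData discussed in Section 6.

```python
# Triples are (subject, predicate, object); "type" records class membership.
triples = set()


def add(subject, predicate, obj):
    triples.add((subject, predicate, obj))


# 1. Identify the main actors (hypothetical individuals).
add("employee1", "type", "Person.Non-MaliciousInsider.End-User")
add("clinic1", "type", "Organization")
# 2. Model the actors' actions: discarding records in a dumpster (hypothetical).
add("disposal1", "type", "ErrorAction")
add("employee1", "performed", "disposal1")
# 3. Establish basic relationships.
add("employee1", "worksFor", "clinic1")
# 4. Model the infrastructure; in this case it is paper records, not an IT system.
add("paperRecords1", "type", "Asset")
add("paperRecords1", "contains", "patientData")
add("patientData", "type", "MedicalInformation")
add("patientData", "type", "UniquelyIdentifiableInformation")
# 5. Connect infrastructure to actors.
add("clinic1", "owns", "paperRecords1")
# 6. Model IT actions: the case has none, so only the disposal's effect is recorded.
add("disposal1", "exposed", "paperRecords1")


def types_of(individual):
    """All classes an individual is asserted to belong to."""
    return {o for s, p, o in triples if s == individual and p == "type"}


print(sorted(types_of("patientData")))
# ['MedicalInformation', 'UniquelyIdentifiableInformation']
```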

6. Discussion

The base classes of the CMU Insider Threat Ontology provide extensive capability for modeling human actions that lead to cybersecurity breaches. The extensions to the malicious insider ontology proposed here proved sufficient to formalize the case chosen from the Privacy Rights Clearinghouse database. As commonly occurs when using the Insider Threat Ontology, the current case illustrates multiple inheritance, here in the form of an instance that belongs to more than one class. In Figure 3, patientData is an instance of both MedicalInformation and UniquelyIdentifiableInformation, both of which are part of the CMU ontology. This representation was necessary because both patient medical records and Social Security numbers were compromised in the case being modeled. As discussed earlier, multiple inheritance is common in ontology construction. The Asset and Information class hierarchies in the CMU ontology are well formed and comprehensive, and they were used without modification to model this end-user error case.

The model in Figure 3 uses a common convention, dot notation, to represent superclass-subclass relationships. Dot notation is similarly used in programming languages to form fully qualified names. For instance, the characterization Person.Non-MaliciousInsider.End-User distinguishes this end user from one who has malicious intent.
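Dot notation also makes a name unambiguous when the same short class name occurs under more than one parent. The sketch below assumes, as the prose of Section 4.1 suggests but Figure 1 may not show exactly, that End-User sits under both MaliciousInsider and Non-MaliciousInsider; `qualified_names` is a hypothetical helper that lists every fully qualified name a short name could refer to.

```python
# Hypothetical parent -> subclass map for the Person branch of the extended lattice.
SUBCLASSES = {
    "Person": ["MaliciousInsider", "Non-MaliciousInsider"],
    "MaliciousInsider": ["SystemAdministrator", "End-User"],
    "Non-MaliciousInsider": ["SystemAdministrator", "End-User"],
}


def qualified_names(short_name, root="Person"):
    """Return every dotted path from root down to a class called short_name."""
    found = []

    def walk(node, path):
        if node == short_name:
            found.append(".".join(path))
        for child in SUBCLASSES.get(node, []):
            walk(child, path + [child])

    walk(root, [root])
    return found


print(qualified_names("End-User"))
# ['Person.MaliciousInsider.End-User', 'Person.Non-MaliciousInsider.End-User']
```

Because the short name resolves to two paths, only the fully qualified form identifies the non-malicious end user unambiguously.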

7. Conclusions

The authors of the CMU report presenting the Insider Threat Ontology correctly state that the lack of a broadly accepted, standard, formal representation of knowledge in the field of cybersecurity is a sign of the field's immaturity. Their ontology represents an important step toward resolving this deficiency. The extensions proposed in this article provide a means to model accounts of cases involving human error in addition to deliberate malicious actions. They also permit a more detailed characterization of an actor as a technical person or an end user. It is inherently difficult to model the real world formally; consequently, object modeling is always an iterative process, and additional refinements to the extensions suggested here will certainly be needed. Nevertheless, these extensions are a first step toward enabling fine-grained representation of security breaches involving human error.

References

- [1] Costa, D.L., Albrethsen, M.J., Collins, M.L., Perl, S.J., Silowash, G.J., and Spooner, D. An Insider Threat Indicator Ontology. Technical Report CMU/SEI-2016-TR-007. Online. Available: https://resources.sei.cmu.edu/asset_files/TechnicalReport/2016_005_001_454627.pdf

- [2] IBM Security Services. 2014 Cyber Security Intelligence Index. Online. Available: https://media.scmagazine.com/documents/82/ibm_cyber_security_intelligenc_20450.pdf

- [3] Howarth, F. The Role of Human Error in Successful Security Attacks. Online. Available: https://securityintelligence.com/the-role-of-human-error-in-successful-security-attacks/

- [4] Glaser, A. Here’s What We Know about Russia and the DNC Hack. Online. Available: https://www.wired.com/2016/07/heres-know-russia-dnc-hack/

- [5] Verizon. 2013 Data Breach Investigations Report. Online. Available: http://www.verizonenterprise.com/resources/reports/rp_data-breach-investigations-report-2013_en_xg.pdf

- [6] Hummer, L. Security Starts with People: Three Steps to Build a Strong Insider Threat Protection Program. Online. Available: https://securityintelligence.com/security-starts-with-people-three-steps-to-build-a-strong-insider-threat-protection-program/

- [7] Coffey, J. W., Baskin, A., and Snider, D. Knowledge Elicitation and Conceptual Modeling to Foster Security and Trust in SOA System Evolution. In El-Sheikh, E., Zimmerman, A., and Jain, L. (Eds.), Emerging Trends in the Evolution of Service Oriented and Enterprise Architectures. Springer, 2016.

- [8] Grunzweig, J. Understanding and Preventing Point of Sale Attacks. Online. Available: http://researchcenter.paloaltonetworks.com/2015/10/understanding-and-preventing-point-of-sale-attacks/

- [9] Hern, A. What is Dridex, and how can I stay safe? Online. Available: https://www.theguardian.com/technology/2015/oct/14/what-is-dridex-how-can-i-stay-safe

- [10] Armerding, T. Security training is lacking: Here are tips on how to do it better. Online. Available: http://www.csoonline.com/article/2362793/security-leadership/security-training-is-lacking-here-are-tips-on-how-to-do-it-better.html

- [11] Gruber, T.R. The role of common ontology in achieving sharable, reusable knowledge bases. In Allen, J. A., Fikes, R., and Sandewall, E. (Eds.), Principles of Knowledge Representation and Reasoning: Proceedings of the Second International Conference. San Mateo, CA: Morgan Kaufmann, 1991.

- [12] W3C. OWL 2 Web Ontology Language Structural Specification and Functional-Style Syntax (Second Edition). Online. Available: https://www.w3.org/TR/owl2-syntax/

- [13] Novak, J.D. and Gowin, D.B. Learning How to Learn. Cambridge University Press, 1984.

- [14] Peterson, E. SpaceTime Ontology. Online. Available: http://semanic.org/Ontology/OntDef/Cur/SpaceTime.owl

- [15] Mundie, D. How Ontologies Can Help Build a Science of Cybersecurity. Online. Available: https://insights.sei.cmu.edu/insider-threat/2013/03/how-ontologies-can-help-build-a-science-of-cybersecurity.html

- [16] Mundie, D., and McIntire, D. The Mal: A Malware Analysis Lexicon. Online. Available: http://resources.sei.cmu.edu/library/asset-view.cfm?assetID=40240

- [17] Privacy Rights Clearinghouse. Data Breaches. Online. Available: https://www.privacyrights.org/data-breaches

- [18] Kharif, O. 2016 Was a Record Year for Data Breaches. Online. Available: https://www.bloomberg.com/news/articles/2017-01-19/data-breaches-hit-record-in-2016-as-dnc-wendy-s-co-hacked

- [19] ITRC. The Identity Theft Resource Center. Online. Available: http://www.idtheftcenter.org/

- [20] APWG. APWG: Unifying the Global Response to Cybercrime. Online. Available: http://www.antiphishing.org/

- [21] Daugherty, W. R. Human Error Is to Blame for Most Breaches. Online. Available: http://www.cybersecuritytrend.com/topics/cyber-security/articles/421821-human-error-to-blame-most-breaches.htm

- [22] Protégé. What is an Ontology? Building and Inference Using The Stanford Protege tool Part I. Online. Available: https://www.youtube.com/watch?v=1IQScWqzbPw

- [23] Barrett, Chen, and Maglio. System Administrators are Users Too: Designing Workspaces for Managing Internet-Scale Systems. Proceedings of CHI 2003, pp. 1068-1069. April 5-10, 2003, Ft. Lauderdale, Florida, USA. ACM 1-58113-637-4/03/0004.

- [24] Fulp, E. W., Gage, D., John, D., McNiece, M., Turkett, W., and Zhou, X. An Evolutionary Strategy for Resilient Cyber Defense. Proceedings of the 2015 IEEE Global Communications Conference. DOI: 10.1109/GLOCOM.2015.7417814